The Agent Economy: A New Computational Paradigm

Alex Patow / May 6, 2025

TL;DR

The "agent economy" refers to a new computational paradigm driven by AI-powered autonomous agents that can independently perform tasks, make decisions, and engage in transactions on behalf of individuals and businesses.

This fundamental change in how computational systems interact will reshape how work is coordinated, decisions are made, and value is created.

This article examines the evolution from isolated AI systems to an interconnected agent ecosystem, exploring how standardized communication protocols, specialized hardware, and emerging technical capabilities are converging to create what may become one of the most significant economic and computational transformations of our era.

A Brief History of Agents

The concept of autonomous agents has deep philosophical roots, beginning with Alan Turing's 1950 paper on machine intelligence and John McCarthy's 1959 "advice taker" concept that explored computer systems capable of using logic to deduce new information and take actions.

The theoretical foundation for modern agent systems emerged in the 1970s with Carl Hewitt's Actor Model, which proposed computational entities that could communicate asynchronously.

The 1990s saw practical implementations through expert systems and agent communication languages like Knowledge Query and Manipulation Language (KQML). Microsoft's Office Assistant ("Clippy") represented an early consumer application attempt, despite its limitations.

Clippy: The O.G. Agent?

Academic research on multi-agent systems continued through the 1990s-2010s with frameworks like Swarm, Repast, and JADE. While these platforms pioneered standardized communication protocols and modular architectures, most applications remained in simulations rather than real-world deployment, due to factors such as market readiness, limited computing resources of the era, and insufficient reasoning capabilities within the agents themselves.

The 2000s brought web services and service-oriented architecture, with SOAP and later REST-style APIs breaking down monolithic applications into independently deployable services and setting the stage for distributed computational models.

Voice assistants emerged in the 2010s with Siri (2011), Amazon Alexa (2014), and Google Assistant (2016), offering natural language interfaces but with agency limited to pre-programmed functions.

The 2020s marked a fundamental shift with transformer-based large language models, which build on the architecture introduced in the 2017 paper “Attention Is All You Need”. Early experiments like AutoGPT and BabyAGI in 2023 demonstrated the potential of LLMs as reasoning engines, but were primarily focused on prompt chaining and task management rather than true agent functionality.

By 2024, dedicated agent frameworks emerged (LangGraph, CrewAI, and AutoGen), alongside Anthropic's Model Context Protocol (MCP), a proposed standard for managing access to additional context, moving beyond simple prompt chaining toward more sophisticated agent functionality.

Beyond Isolated Agents: Communication Standards Emerge (2025)

Community interest in Google’s A2A is growing quickly: its repository reached 10k stars on GitHub in 5 days, compared with 12 weeks for MCP.

The introduction of agent communication protocols like the Agent-to-Agent (A2A) standard in 2025 represents an important milestone in this evolutionary path. These emerging protocols are facilitating the transition from isolated agents to an integrated agent ecosystem for several reasons:

Industry Collaboration: The support of over 50 major technology companies for standards like A2A demonstrates widespread industry recognition of the agent paradigm and a collective commitment to interoperability.

Addressing Integration Challenges: Enterprise software has long been plagued by integration problems. Open communication standards provide standardized ways for autonomous agents to work across organizational boundaries and software systems.

Enabling Composition Without Central Control: By establishing protocols for agents to discover and leverage each other's capabilities, these standards enable the composition of increasingly complex agent behaviors without requiring centralized coordination.

Just as earlier protocols like SOAP and REST enabled the API economy, emerging agent communication standards will likely form the foundation for the agent economy, allowing agents to discover each other, coordinate activities, and work together across organizational boundaries in ways that were previously impossible.
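To make the discovery idea more concrete, here is a minimal sketch of capability-based lookup between agents. It is not the A2A wire format: the `AgentCard` fields, the `discover` function, and the example URLs are hypothetical names we chose purely for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentCard:
    """Hypothetical self-description an agent publishes (not the A2A schema)."""
    name: str
    url: str
    skills: frozenset[str]


def discover(known_cards: list[AgentCard], skill: str) -> list[AgentCard]:
    """Return every known agent that advertises the requested skill.

    `known_cards` stands in for cards an agent has fetched directly from its
    peers, so no central coordinator is assumed.
    """
    return [card for card in known_cards if skill in card.skills]


# Toy data: two peers with made-up endpoints.
cards = [
    AgentCard("translator", "https://agents.example/translate", frozenset({"translate"})),
    AgentCard("analyst", "https://agents.example/analyst", frozenset({"summarize", "forecast"})),
]

matches = discover(cards, "forecast")
print([card.name for card in matches])
```

The point of the sketch is that composition only requires each agent to describe what it can do in a shared vocabulary; who stores the descriptions is a separate design choice.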

Inflections

A question that we ask ourselves at Inflection every day is "What are the profound shifts in technology, science, and markets that are enabling novel behaviors at a vast scale?"

Cultural Inflections

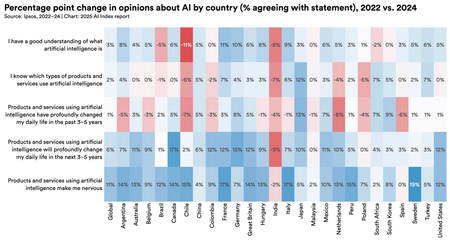

Public sentiment around AI usage (Stanford)

Global sentiment data reveals a profound shift in how AI is perceived. Between 2022 and 2024, the Ipsos survey shows that most countries increasingly believe AI products and services offer more benefits than drawbacks, with nations like France and Germany seeing 10% increases in positive sentiment. This transition from skepticism to acceptance represents a foundational inflection enabling novel behaviors at scale.

This inflection is most evident in enterprise adoption of autonomous AI agents. Johnson & Johnson now employs agents to optimize pharmaceutical solvent switches in drug discovery, while Moody's has developed 35 interconnected agents with distinct "personalities" that can reach different analytical conclusions on complex financial matters (WSJ Article).

Similarly, Inflection's own team of agents assists with deal sourcing and research. These examples demonstrate how organizations are evolving from viewing AI systems as mere tools to treating them as semi-autonomous team members with defined roles and responsibilities, creating human-AI collaborative ecosystems that will restructure knowledge work across industries.

Technical Inflections

The technological foundation for the agent economy rests on several key inflections:

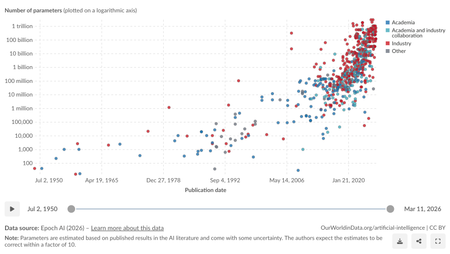

Increasing parameter counts (Our World in Data)

1) We've witnessed exponential growth in model scale, with systems now exceeding 1 trillion parameters and delivering unprecedented performance in generating text, images, audio, and video. This scale-up has been accompanied by democratization through open source, allowing secure deployment of powerful models virtually anywhere.

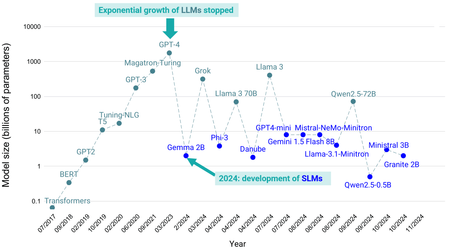

The rise of small language models (Objectbox.io)

2) Interestingly, 2024 saw the emergence of a countertrend with "Small Language Models" (SLMs). Despite being larger than nearly all pre-2020 models, these SLMs represent a pivot away from ever-increasing parameter counts.

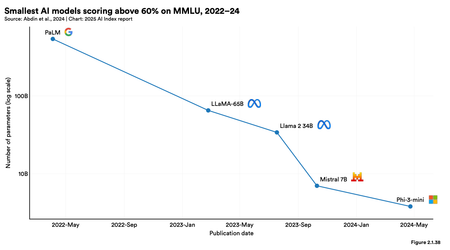

Recent small model performance increases (Artificial Intelligence Index Report)

This trend toward optimization rather than raw scale enables local-first agents that can run directly on consumer devices. The performance trajectory of these smaller models is impressive: some 8B parameter models now achieve over 60% on challenging benchmarks like Massive Multitask Language Understanding (MMLU), approaching the capabilities previously exclusive to flagship models but with dramatically reduced computational requirements.

3) The agent paradigm has been further accelerated by specialized architectures like Large Action Models (LAMs). Unlike traditional LLMs optimized for text generation, LAMs are specifically designed for tool use and action execution. This architectural specialization allows LAMs to outperform general-purpose LLMs of comparable size when it comes to completing multi-step tasks, making them ideal for autonomous agent applications. The work by Salesforce on xLAM exemplifies this trend toward purpose-built model architectures for specific agent capabilities.

Inference speeds with specialized ASICs (Groq)

4) Complementing these model advancements is the rapid evolution of inference hardware. Companies like Groq and Cerebras have developed Application-Specific Integrated Circuits (ASICs) achieving inference speeds up to 3x faster than traditional GPUs. This hardware acceleration fundamentally changes the economics and responsiveness of agent interactions, enabling real-time performance even with complex reasoning chains.

These technical inflections (trillion-parameter models, efficient SLMs, specialized action-oriented architectures, and accelerated inference hardware), combined with standardized A2A communication, create the perfect storm of capabilities needed for the agent economy to flourish.

The Agent Technology Stack: Current State and Future Evolution

The following analysis examines five critical components of the agent technology stack, contrasting their current state with projected developments over the next decade, culminating in our vision for 2035. This timeframe allows for the necessary technical advances and market adoption cycles to realize the full potential of these technologies.

1. Intent Expression Systems

Current State: Today's programming paradigm remains rooted in explicit instruction-giving. Specialized frameworks like DSPy and LMQL provide abstraction layers for LLM integration, while IDE-integrated assistants (such as Cursor, Windsurf, and GitHub Copilot) supplement traditional programming. Tools such as Lovable, v0, and Bolt are pioneering natural language interfaces that enable software creation with minimal coding knowledge, but they still primarily serve traditional software development workflows: generating, modifying, and maintaining conventional codebases.

Future Evolution: By 2035, computation itself will be fundamentally reimagined around human intent rather than explicit programming. These new intent expression systems will transcend traditional software development and become universal interfaces for directing both digital and physical systems:

Scientific research will be guided through high-level experimental design. Systems will understand protocols, manage lab automation, and autonomously adapt procedures based on emerging results.

Creative professionals will express high-level goals while systems handle technical implementation. Architects, for example, will specify buildings that automatically conform to structural and zoning requirements.

Manufacturing systems will understand intent throughout the entire process. They will translate high-level product specifications into detailed hardware optimizations, bridging human goals and physical production.

Business processes will be defined through intuitive interfaces. Systems will orchestrate data collection, analysis, and process automation while maintaining compliance and operational constraints.

These systems will understand more than syntax or semantics. They will incorporate deep domain expertise, including regulatory requirements, safety constraints, best practices, and complex interdependencies. This will enable true intent-to-execution workflows where human experts can focus entirely on their domain goals instead of computational implementation.

The distinction between programmer and domain expert will vanish as these intent expression systems become the primary interface for directing both computational and physical work. This represents a fundamental shift: computation will adapt to human thought patterns rather than humans adapting to computational thinking.

2. Infrastructure Primitives

Current State: Multiple well-capitalized durable compute startups (Temporal, Inngest, Modal, Trigger.dev) provide essential foundations for agent workloads by addressing fundamental limitations of traditional serverless functions. These platforms enable long-running processes, persistent state management, and workflow continuity. In parallel, specialized services like OpenRouter have emerged to help developers overcome reliability challenges in LLM routing and model selection.

Despite these advances, current infrastructure solutions remain centralized and don't adequately address the needs for fluid operation across different computing environments.

Future Evolution: By 2035, agent infrastructure will evolve into a distributed system with three distinct capabilities:

Adaptive Execution Location: Agents will intelligently shift processing between edge devices and cloud resources based on contextual factors (network conditions, privacy requirements, battery life), maintaining state during transitions.

Resource-Optimized Model Selection: Infrastructure will dynamically allocate computation across on-device, edge, and cloud resources. This includes using lightweight models locally for privacy-sensitive tasks, edge computing for regional needs, and cloud resources for complex reasoning. The system will optimize performance, efficiency, and responsiveness based on task requirements.

Decentralized Data Architecture: Local-first synchronization engines (AnyType*, Zero) will enable peer-to-peer data transfer between devices without requiring cloud centralization, allowing agents to maintain data consistency even with intermittent connectivity.

While realizing this vision will require solving complex challenges around maintaining consistency and reliability across highly distributed systems, the foundational building blocks are already emerging. This distributed infrastructure will support continuous agent operation across diverse computing environments, balancing privacy and security with performance while optimizing resource utilization for each specific task.
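As a rough illustration of adaptive execution location, the sketch below picks where a task should run from a handful of contextual signals. The `Context` fields, the `choose_target` policy, and the 20% battery threshold are our own assumptions for the example, not a description of any existing platform.

```python
from dataclasses import dataclass
from enum import Enum


class Target(Enum):
    DEVICE = "device"
    EDGE = "edge"
    CLOUD = "cloud"


@dataclass
class Context:
    """Hypothetical signals an agent runtime might observe."""
    privacy_sensitive: bool
    network_ok: bool
    battery_pct: int  # 0-100
    needs_deep_reasoning: bool


def choose_target(ctx: Context) -> Target:
    """Toy placement policy; the rule order and threshold are assumptions."""
    # Sensitive data, or no connectivity, keeps the work on the device.
    if ctx.privacy_sensitive or not ctx.network_ok:
        return Target.DEVICE
    # Complex reasoning goes to the largest models in the cloud.
    if ctx.needs_deep_reasoning:
        return Target.CLOUD
    # A low battery offloads light work to a nearby edge node.
    if ctx.battery_pct < 20:
        return Target.EDGE
    return Target.DEVICE


print(choose_target(Context(False, True, 80, True)).value)
```

A production system would also need to carry agent state across these transitions, which is the harder part and is not shown here.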

3. Memory Systems

Current State: Today's agents face fundamental memory limitations stemming from limited context windows. Current solutions include Retrieval Augmented Generation (RAG) systems for incorporating external knowledge, and early implementations of hierarchical memory models (Zep, Mem0). These systems typically run on conventional hardware using standard DRAM and high-bandwidth memory (HBM) designed for general GPU/TPU workloads rather than agent-specific memory patterns. The combination of software architectures not optimized for associative recall and off-the-shelf memory hardware restricts agents' long-term coherence and reasoning capabilities.

Future Evolution: By 2035, specialized hardware-accelerated memory systems will transform agent capabilities through:

Neuromorphic Memory: Hardware architectures inspired by the brain's neural structures that enable efficient associative memory and pattern recognition. Unlike traditional memory that requires exact addressing, neuromorphic systems can retrieve information based on similarity and context, enabling more human-like recall.

In-Memory Computing: Technology that performs calculations directly within memory units rather than transferring data to a separate processor. This eliminates the bottleneck of moving data between storage and computation, dramatically accelerating similarity searches and pattern matching operations essential for agent memory systems.

Persistent Memory Technologies: Advanced storage mediums that maintain state without power while offering speeds approaching RAM. These technologies bridge the gap between volatile memory and permanent storage, preserving agent context across sessions without time-consuming serialization and deserialization processes.

Though significant engineering challenges remain in translating these biological inspirations into production-ready systems, the potential benefits justify continued investment and development. These memory advances will enable agents with more human-like memory characteristics: contextual recall, intelligent forgetting, and the ability to form connections between seemingly unrelated concepts, without requiring constant reloading of context or expensive computation to access relevant information.
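The difference between exact addressing and similarity-based recall can be shown in a few lines of software. The sketch below emulates associative recall with cosine similarity over toy vectors. The memory entries and their three-dimensional embeddings are invented for illustration, and this is the operation such hardware would aim to accelerate, not a model of the hardware itself.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def recall(memory: list[tuple[str, list[float]]], cue: list[float], k: int = 1) -> list[str]:
    """Return the k stored items most similar to the cue, best match first."""
    ranked = sorted(memory, key=lambda item: cosine(item[1], cue), reverse=True)
    return [text for text, _ in ranked[:k]]


# Toy memory: (text, made-up embedding). Real embeddings have far more dimensions.
memory = [
    ("user prefers morning meetings", [0.9, 0.1, 0.0]),
    ("an invoice is overdue", [0.0, 0.2, 0.9]),
    ("user is vegetarian", [0.1, 0.9, 0.1]),
]

# The cue matches no stored vector exactly, yet the closest memory is returned.
print(recall(memory, [0.8, 0.2, 0.1]))
```

Every query here scans and scores the whole memory, which is exactly the data movement that in-memory computing is meant to avoid.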

4. Compute

Current State: Agent computation today runs across CPUs and specialized AI accelerators like GPUs and TPUs. While these architectures are highly optimized for their primary workloads (CPUs for general computation, AI accelerators for parallel matrix operations), none are specifically designed for the unique demands of agent systems. This leads to inefficiencies as agents frequently need to coordinate across these different compute architectures.

Future Evolution: By 2035, specialized compute architectures designed specifically for agent workloads will emerge:

Heterogeneous Compute Orchestration: Systems that intelligently route different agent tasks to optimal hardware based on their computational profile, seamlessly bridging between AI operations and traditional code execution.

Custom Inference ASICs: Application-specific integrated circuits optimized for agent inference workloads running directly on edge and mobile devices, enabling sophisticated agent capabilities without cloud dependency.

Agent Communication Processors: Purpose-built silicon optimizing message parsing and routing between agents, dramatically reducing the overhead of inter-agent communication.

These specialized computing architectures will substantially reduce the computational costs of agent operations while enabling more sophisticated capabilities, making always-on agents economically viable for a broader range of applications.

5. Networking

Current State: As described earlier, the Agent-to-Agent (A2A) protocol has established a standardized way for agents to discover capabilities, coordinate activities, and work together across organizational boundaries. While this represents a significant advance in agent interoperability, the current JSON-based approach creates substantial overhead at scale, with verbose text-based protocols consuming excessive network resources during multi-agent communication.

Future Evolution: By 2035, agent networking will evolve from today's verbose text-based protocols to more efficient communication paradigms optimized for machine-to-machine interactions at scale:

Optimized Binary Protocols: Like the evolution from XML-SOAP to JSON-REST to gRPC in web services, agent communication will follow a similar optimization path, dramatically reducing bandwidth consumption and parsing overhead.

Hardware-Accelerated Authentication: Specialized circuitry for high-throughput credential verification will enable secure agent interactions across organizational boundaries without the computational overhead of constantly verifying credentials and evaluating complex permission trees.

Standardized Intent Schemas: A shared vocabulary of intentions and capabilities will enable more efficient negotiation between agents, allowing them to coordinate complex activities with minimal communication overhead.

Privacy-Preserving Communication: Secure information sharing mechanisms will enable agents to communicate effectively without exposing underlying sensitive data, balancing collaboration needs with privacy requirements.

These advancements will make agent-to-agent communication not only more efficient but also more secure and trustworthy, enabling truly autonomous collaboration across organizational boundaries.
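The overhead argument for binary protocols is easy to see on a single message. The sketch below encodes the same small payload as JSON and as a fixed binary layout using Python's standard `struct` module. The message fields and the layout are hypothetical and are not part of A2A or any proposed successor.

```python
import json
import struct

# Hypothetical inter-agent message with three integer fields.
message = {"task_id": 1234, "priority": 2, "deadline_ms": 1500}

# Text encoding: field names travel with every message.
json_bytes = json.dumps(message).encode("utf-8")

# Assumed fixed layout, network byte order, no padding:
# unsigned 32-bit task id, unsigned 8-bit priority, unsigned 32-bit deadline.
LAYOUT = struct.Struct("!IBI")
binary_bytes = LAYOUT.pack(message["task_id"], message["priority"], message["deadline_ms"])

print(len(json_bytes), len(binary_bytes))

# Decoding needs the shared layout, since the bytes carry no field names.
task_id, priority, deadline_ms = LAYOUT.unpack(binary_bytes)
```

The binary form is several times smaller because both sides agree on the schema in advance, which is the same trade-off gRPC makes: less bandwidth and parsing work in exchange for messages that are no longer self-describing.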

Investment Opportunities

The agent technology stack presents a wealth of investment opportunities across multiple layers. From a deep-tech, pre-seed investment perspective, here’s what excites us the most:

Infrastructure Optimization

Purpose-built infrastructure optimized for agent workloads: will we see an agent-first cloud the same way EC2 got AWS started? (Agentuity)

Agent orchestration systems that distribute tasks optimally across different types of compute

Edge deployment orchestration systems that enable disconnected operation

Memory Systems

Neuromorphic memory architectures for efficient associative recall

In-memory computing solutions eliminating data transfer bottlenecks

Persistent memory technologies preserving agent state without serialization overhead

Compute Optimization

Dynamic model switching frameworks optimizing for specific workloads

Specialized inference chips for edge and mobile devices (Ubitium*)

Energy-efficient processors designed for agent operation patterns

Security and Information Sharing

Technologies enabling secure agent-to-agent communication without exposing underlying data or metadata (Tune Insight*, Hedy)

Local computation frameworks that keep sensitive data within secure environments (AnyType*)

Context-awareness "bubbles" constraining information to certain areas and actions

Privacy-preserving techniques like federated learning, secure enclaves, and homomorphic encryption (Fabric*, Blyss)

Agent Discovery and Interoperability

Agent registries and discovery protocols enabling agents to locate, assess, and invoke one another (Synergetics)

Intent extraction and summarization techniques enabling more efficient coordination

Distributed trust verification systems allowing secure cross-organizational interactions

The most compelling opportunities lie at the intersection of these domains, where technological advances in one area can unlock capabilities across the entire stack.

If you’re building something in this space, please let us know!

*) Inflection portfolio company

Conclusion

The emergence of standardized agent communication protocols marks a fundamental inflection point in computing. Just as the Cambrian explosion was enabled by foundational biological building blocks, the agent economy is being unlocked by key technical advances across the entire stack: from intent expression systems to specialized silicon, from neuromorphic memory to agent-specific compute architectures.

This shift from isolated AI systems to interconnected agent networks represents more than an iterative improvement. It signals the emergence of truly autonomous computational systems that can collaborate, reason, and evolve without constant human intervention. The economic and societal impact of this transition will likely exceed previous computing paradigms, as it fundamentally transforms how we express intent to machines and how machines coordinate with each other.

The agent technology stack emerging today represents a complete reimagining of our computing infrastructure. Whether you're an investor, builder, or observer, now is the time to engage with the protocols and platforms that will define this next era of computation.

A tremendous thank you to Lele Cao from Microsoft for critical feedback and ideas. Our gratitude also goes to the many dozens of founders and researchers who spent time with the Inflection team discussing the above themes over the last few years.